How we use Hermes Agent for weekly SEO research

(and why the output goes to comments, not chat)

Open the SEO research doc you started two months ago. Count the rows you've actually acted on. If you're like us, the answer is closer to two than to twenty.

This isn't a research problem. It's a landing problem. You ask ChatGPT to scan a competitor, and it gives you 800 words. You copy one sentence into Notion, close the tab, and forget the rest. The research happened. Acting on it didn't.

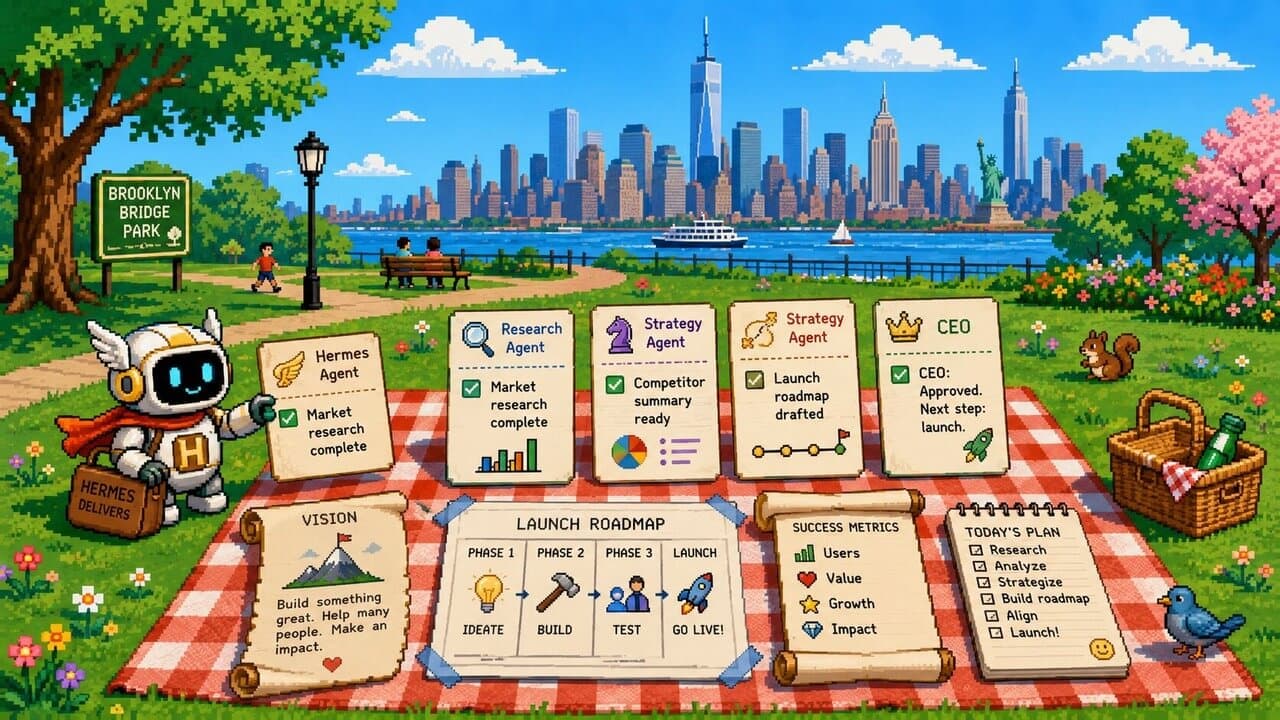

We had this problem on the Tulsk blog for a while. Then we tried something we'd been telling our customers to try: stop asking AI for research on demand. Have a Hermes Agent run weekly on a schedule, and have its output land where the team can actually pick it up — as comments on the tasks the work belongs to. Not in a chat window.

This post is what that looks like in practice — the cadence, the format, the specific ways our first version broke and what we changed. The summary up front: Hermes is the engine, but the workflow lives in scheduled runs + comment threads. The agent doesn't have to be smarter; it has to land in the right place.

Why scheduled, not on-demand

On-demand AI research has a hidden tax: you have to remember to ask. Most weeks, you don't. The Monday morning where you'd have spotted a competitor's new post in your cluster is the same Monday morning where you're heads-down on a customer call. The research you'd have run is research you didn't run.

Scheduled flips this. The agent shows up before you need it. By the time you're at your desk on Monday, the scan is done and the findings are waiting.

We currently have four weekly scans running:

- Competitor blog scan — what did the companies in our space ship in the last seven days, and which of their posts target keywords we're trying to rank for?

- Our blog performance — which of our posts moved up or down in search, and which haven't been refreshed in 90+ days?

- Rising keyword scan — what queries in our cluster are gaining volume that we don't have a post for yet?

- SERP feature shifts — which of our target queries had AI overviews or featured snippets appear, change, or move?
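The "haven't been refreshed in 90+ days" check is the most mechanical of the four, which makes it a good place to see concretely what "the agent does the gather-and-flag part" means. Below is a minimal Python sketch, not how Hermes or Tulsk computes it. It assumes you already have each post's last-updated date, and the slugs and dates are made up for illustration.

```python
from datetime import date, timedelta

# The scan's rule: flag posts not refreshed in 90 or more days.
STALE_AFTER = timedelta(days=90)


def stale_posts(last_updated: dict[str, date], today: date) -> list[str]:
    """Return slugs of posts last refreshed 90+ days before `today`, oldest first."""
    stale = [
        slug for slug, updated in last_updated.items()
        if today - updated >= STALE_AFTER
    ]
    return sorted(stale, key=lambda slug: last_updated[slug])


# Hypothetical slugs and dates, for illustration only.
posts = {"post-a": date(2024, 1, 10), "post-b": date(2024, 5, 1)}
print(stale_posts(posts, today=date(2024, 5, 20)))  # ['post-a']
```

The comparison is `>=`, so a post refreshed exactly 90 days ago counts as stale, matching "90+".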

Most weeks the four scans run Monday morning, before our Tuesday content sync. By the time we sit down to plan the week, Hermes has done the mechanical part (gather, summarize, flag), and we start from "what should we do about it" instead of "let me go look." In weeks where we want lead time before the weekend, we shift the runs to Friday so the weekend's revisions can use what comes back. The day is a knob; the constant is that the runs are scheduled.
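If your scheduler wants an explicit timestamp rather than a weekday, the "day is a knob" idea fits in a few lines. This is a hypothetical helper, not Tulsk's scheduling API, and the 07:00 run hour is an assumption.

```python
from datetime import datetime, timedelta

# datetime.weekday() numbering: Monday is 0, Friday is 4.
MONDAY, FRIDAY = 0, 4


def next_run(now: datetime, weekday: int, hour: int = 7) -> datetime:
    """Return the next `weekday` at `hour`:00 that is strictly after `now`."""
    candidate = now.replace(hour=hour, minute=0, second=0, microsecond=0)
    # Move forward to the requested weekday (0 days if today is that weekday).
    candidate += timedelta(days=(weekday - now.weekday()) % 7)
    # Today's slot has already passed: use the same weekday next week.
    if candidate <= now:
        candidate += timedelta(days=7)
    return candidate


# Tuesday 2024-05-21, 10:00: the next Monday run is 2024-05-27 07:00.
print(next_run(datetime(2024, 5, 21, 10), MONDAY))
```

Switching from Monday to Friday runs is a one-argument change. If your scheduler takes standard cron syntax instead, the equivalent would be `0 7 * * 1` (Monday) or `0 7 * * 5` (Friday).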

Tulsk's scheduled agents are how we wire this up in practice. You give Hermes a project to run on, set a recurring schedule, and the run happens whether you remember it or not. The mechanism is boring. The result isn't.

Why the output goes to comments, not chat

This is the part most "AI for SEO" guides skip.

The default place agent output goes is a chat window. You see the answer. Nobody else does. It scrolls off, you forget which thread, and three weeks later when you wonder "didn't the agent already check this?", you can't find it.

Comments on a task look ordinary, which is why they get underestimated. They do four things a chat window can't:

- Anyone can see them. When a teammate opens the same task next week, the agent's findings are right there. No "let me forward you that ChatGPT thread."

- They're addressable. You can @mention someone, link a source, quote a finding, mark it accepted or rejected. The conversation has structure.

- They're attached to work. A comment lives on the task it's about. "Refresh post X" gets a comment from Hermes saying "Competitor Y just shipped Z; here's the gap." The finding and the work it triggers are in the same place.

- They're persistent. Three months later, when you ask "why did we refresh that post?", the answer is in the thread. The audit trail is automatic.

The model behind this is simple: the agent surfaces, the human decides. Hermes doesn't ship anything. It posts a comment that says "here are five things from this week's scan that look worth your attention, ranked, with sources." A human reads, accepts two, ignores three, and the accepted ones become tasks.

This is the part of the workflow that doesn't transfer to a chatbot — not because chatbots can't write good summaries, but because the summaries land in the wrong place. A great summary stuck inside a private chat window is a worse outcome than a mediocre summary every teammate can see and react to.

If you've used Tulsk, comments-on-tasks is the default surface for any agent run; we just point Hermes at it. If you haven't, the principle still ports: make the output land somewhere your team can act on without you forwarding anything.

What we ran — and what broke before it worked

Last Monday, our competitor blog scan posted findings on the "Competitor watch" task in our blog project. The shape was the one we'd designed for: a short ranked list, each item with a one-line action recommendation and source URLs.
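Here's roughly what that comment shape looks like as data, sketched in Python. The `Finding` fields and the plain-text layout are our illustration, not a Hermes or Tulsk format. The point is that every item carries its own action and sources, so the comment reads top to bottom without anyone having to go look.

```python
from dataclasses import dataclass, field


@dataclass
class Finding:
    summary: str  # what the scan noticed
    action: str   # one-line recommendation
    sources: list[str] = field(default_factory=list)  # URLs backing the claim


def render_comment(findings: list[Finding]) -> str:
    """Format already-ranked findings as a plain-text task comment."""
    lines = []
    for rank, finding in enumerate(findings, start=1):
        lines.append(f"{rank}. {finding.summary}")
        lines.append(f"   Action: {finding.action}")
        for url in finding.sources:
            lines.append(f"   Source: {url}")
    return "\n".join(lines)


print(render_comment([
    Finding(
        summary="Competitor Y just shipped Z",
        action="Refresh post X to cover the gap",
        sources=["https://example.com/competitor-y/z"],
    ),
]))
```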

Two of us read the comment over coffee on Monday. We accepted the top-ranked item, opened a draft task, assigned it. The post shipped within the same week — days, not weeks, from "agent surfaced it" to "post live", mostly because the comment was already actionable. It told us what was missing, why it mattered, and what to do.

The first version of this loop was worse. Two things broke, and they probably break for everyone the same way.

Problem one: the agent dumped too much. Our first run posted more findings than we could read in one sitting — a long unranked list, no recommendations, no priority. The comment was unreadable. We skimmed it, gave up, and the whole loop stalled for two weeks because we'd subconsciously trained ourselves to ignore the notification.

The fix wasn't a better model. It was a skill that constrained Hermes's output: maximum five findings per run, each with a one-line action recommendation, ranked by estimated impact. The skill is short — and it changed everything about whether we read the comment.
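The skill itself is written instructions to the agent, not code. If you also want a deterministic backstop before anything gets posted, the same constraint is easy to enforce. This is a minimal sketch, assuming each finding arrives with a numeric `impact` score (a hypothetical field; how you estimate impact is up to you).

```python
from dataclasses import dataclass

MAX_FINDINGS = 5  # the cap from our skill


@dataclass
class Finding:
    summary: str
    action: str    # should be a single line
    impact: float  # hypothetical estimated-impact score; higher ranks first


def top_findings(findings: list[Finding], limit: int = MAX_FINDINGS) -> list[Finding]:
    """Drop findings without a one-line action, rank by impact, keep the top `limit`."""
    usable = [
        f for f in findings
        if f.action.strip() and "\n" not in f.action.strip()
    ]
    # Sort before truncating so the cap keeps the highest-impact items.
    return sorted(usable, key=lambda f: f.impact, reverse=True)[:limit]
```

Sorting before truncating matters: capping first would keep whichever five findings the agent happened to list first, not the five that matter most.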

Problem two: findings without sources. Hermes would say "Competitor X shipped Y" and we'd have to go verify. Half the time we didn't, which meant we were either acting on unchecked claims or ignoring the agent entirely.

The fix: required citation per finding. The skill now hard-constrains the output to include a source URL for every claim. No URL, no finding.
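The same backstop idea applies to citations. This sketch (a hypothetical `Finding` type, Python standard library only) drops any finding that lacks at least one http(s) URL. Note what it doesn't do: it checks that a source is present and well-formed, not that the page actually supports the claim. That part is still a human reading the comment.

```python
from dataclasses import dataclass, field
from urllib.parse import urlparse


@dataclass
class Finding:
    summary: str
    sources: list[str] = field(default_factory=list)


def has_source(finding: Finding) -> bool:
    """True if at least one source looks like an http(s) URL with a host."""
    for url in finding.sources:
        parsed = urlparse(url)
        if parsed.scheme in ("http", "https") and parsed.netloc:
            return True
    return False


def cited_only(findings: list[Finding]) -> list[Finding]:
    """No URL, no finding: keep only findings with at least one usable source."""
    return [f for f in findings if has_source(f)]
```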

The lesson, and this generalizes far past SEO research, is that scheduled run + comment thread is the format. The discipline is in the output spec. Put the time you save on prompting into the skill that controls what the agent is allowed to put in the comment.

Take it to your team

If you're doing recurring research — competitor scans, keyword refresh, content audits, SERP monitoring — the pattern ports. It isn't specific to Hermes, and it isn't specific to SEO. The combination that matters is scheduled agent + output that lands where work happens + a skill that constrains the output format.

Three things you can try this week:

- Pick one weekly research task you keep meaning to do and forgetting. Set it up as a scheduled run. Don't make it more ambitious than one task — get the loop working first.

- Give the agent a maximum and a structure. "≤5 findings, each with an action and a source." Nothing more. The constraint is the feature.

- Land the output where work happens. A task comment, a project thread, a doc your team actually opens — not a chat window only you see.

If you want the workspace already wired for this — scheduled runs, comments-on-tasks, agent-readable skills — that's what we've been building. Hermes is one of the agent runtimes you can point at it; the workflow is what matters.

We wrote a longer piece on the category this lives in: "What is an agentic workspace?"
